The next wave of design systems will be AI-driven

Could AI be the answer to my years of design system grievances?

I’ve worked with design systems for over 10 years, and even as they’ve grown in sophistication, I see the same issues pop up time and time again.

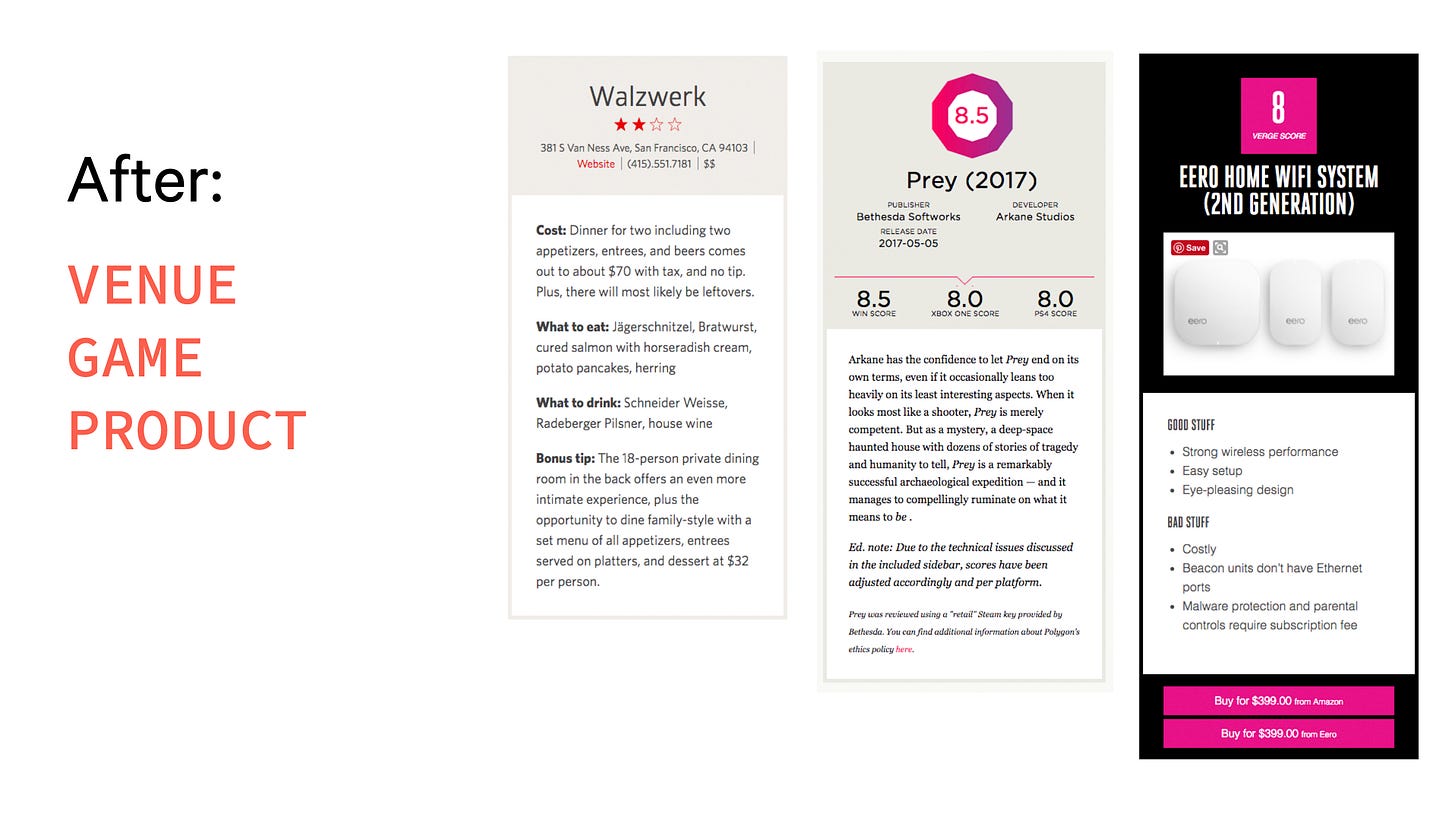

Sameness over cohesion. This is probably the biggest issue I’ve seen with design systems. They can lead to a feeling of visually generic sameness instead of a feeling of cohesion across an experience. Things that aren’t conceptually similar look alike or, conversely, things that are conceptually similar look different.

Assembly over purpose. This happens in part because designing with a system can relegate a designer to the role of an assembler, arranging system elements together on a screen. This isn’t the fault of the designer. It’s hard to know the bounds of a design system when you’re only seeing the small pieces instead of understanding how all the pieces should work together. Last year I posed this question:

Decisions are divorced from where work happens. Which gets me to the last issue: design system rationale is divorced from the place where designers and devs are working, because it lives on a separate documentation site. Not to mention the issue of whether Figma or code is the source of truth, the constant work to keep a Figma UI kit up-to-date with code, and the technical debt that can be created in the transition between Figma and code.

I’ve had several ideas for how these issues could be addressed over the years.

Scenarios

Six years ago, I talked about the idea of scenario-driven design systems. The idea is that unless you’re designing a UI library for broad, general usage like Tailwind, components should be specific instead of generic. An example I used was a “Game” card, “Venue” card, and “Product” card that highlighted the specific information users needed instead of a generic “Scorecard.” The generic card is an example of that visually generic sameness, while the specific cards show how intentional variation can create conceptual clarity.

What I’ve learned since then is that specific components also have their shortcomings. Once someone has a scenario that’s slightly different, they end up having to fork that component. That causes huge problems if you want to make a holistic design change, because the forked components are no longer linked to the design system.

Levers and dials

Levers and dials were my answer to the question “how can you have a range of expression within one system?” The same company can show up differently across a range of applications, but still have recognizable core elements. You first have to define what those core elements are and then how they flex across a range of scenarios. Starbucks showcases this idea really well.
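One way to picture levers and dials is as data: a set of core elements that never change, plus a set of dials that each flex within a defined range. The sketch below is only an illustration under my own assumptions. The dial names, ranges, and token values are hypothetical and don’t come from any real design system.

```typescript
// Hypothetical names and values throughout; not taken from a real system.

// Core elements: identical everywhere the brand shows up.
const core = {
  brandColor: "#0a6b4f",
  fontFamily: "Example Sans",
} as const;

// Dials: values that are allowed to flex per scenario.
type DialName = "density" | "typeContrast" | "expressiveness";
type Dials = Record<DialName, number>;

// Each dial has a permitted range, so flexing stays within the system.
const dialRange: Record<DialName, { min: number; max: number }> = {
  density: { min: 0, max: 1 },
  typeContrast: { min: 1.1, max: 1.6 },
  expressiveness: { min: 0, max: 1 },
};

const defaults: Dials = { density: 0.5, typeContrast: 1.25, expressiveness: 0.5 };

function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Resolve a theme for one scenario: core stays fixed, and every
// requested dial value is clamped to its permitted range.
function resolveTheme(overrides: Partial<Dials>) {
  const dials: Dials = { ...defaults };
  for (const name of Object.keys(dialRange) as DialName[]) {
    const requested = overrides[name] ?? defaults[name];
    const { min, max } = dialRange[name];
    dials[name] = clamp(requested, min, max);
  }
  return { ...core, dials };
}

// Two scenarios that share a core but turn the dials differently.
const dashboard = resolveTheme({ density: 0.9, typeContrast: 1.1 });
const marketing = resolveTheme({ typeContrast: 2.0, expressiveness: 1 });
```

Here the dashboard and the marketing page share the same brand color and typeface, while the out-of-range type contrast requested for marketing is pulled back to the maximum the system allows.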

The issue I’ve found with both of these ideas is that they’re hard to operationalize because they require a lot of explanation, instruction, and documentation. This is where I see huge advantages to design systems with AI.

The potential for AI in design systems

Instead of going back and forth between Figma and a documentation site to assemble screens to hand off to a developer who then builds those screens, what if design system users could just start with a scenario and get a recommended pattern built with the design system?

A type scale for high density data

A type scale for editorial with high size contrast

A modal that asks a user to acknowledge an action

A card that displays information about a customer

A dashboard for items that are advancing through a linear workflow

This would pull designers out from the depths of screen assembly and allow them to spend more time considering the strategic purpose of a UI: “What is a user trying to accomplish and what are the best ways to solve that user problem?” Rationale and recommended patterns could be baked directly into a designer’s workflow instead of living on a separate documentation site. Even better if this were hooked up directly to live components, eliminating the need to keep design and code components in sync. I’m really excited about the potential here to make design systems more effective.
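To make the scenario-first idea a little more concrete, here is a rough sketch of the data such a tool might return: a recommended pattern, the system components it uses, and the rationale, all in one place. Everything in it is an assumption for illustration. The pattern names, component names, and rationale text are hypothetical, and the simple keyword match is only a stand-in for whatever an AI model would actually do.

```typescript
// Hypothetical pattern registry; names and rationale are illustrative only.
interface Pattern {
  name: string;
  components: string[]; // design system components the pattern is built from
  rationale: string;    // the "why", kept next to the pattern itself
  keywords: string[];   // naive matching hints (stand-in for an AI model)
}

const patterns: Pattern[] = [
  {
    name: "AcknowledgeModal",
    components: ["Modal", "Button"],
    rationale: "Interrupts the flow only when the user must confirm an action.",
    keywords: ["modal", "acknowledge"],
  },
  {
    name: "CustomerCard",
    components: ["Card", "Avatar", "Text"],
    rationale: "Surfaces the customer details people look for first.",
    keywords: ["card", "customer"],
  },
  {
    name: "WorkflowDashboard",
    components: ["Board", "Column", "Card"],
    rationale: "Shows where each item sits in a linear workflow.",
    keywords: ["dashboard", "workflow"],
  },
];

// Return the pattern whose keywords overlap most with the scenario,
// or undefined when nothing matches at all.
function recommend(scenario: string): Pattern | undefined {
  const words = new Set(scenario.toLowerCase().split(/\W+/));
  let best: Pattern | undefined;
  let bestScore = 0;
  for (const pattern of patterns) {
    const score = pattern.keywords.filter((k) => words.has(k)).length;
    if (score > bestScore) {
      best = pattern;
      bestScore = score;
    }
  }
  return best;
}

const suggestion = recommend("A card that displays information about a customer");
```

The point of the shape is that the rationale travels with the recommendation, so a designer sees why a pattern fits at the moment they ask for it.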